I am a graduate student in Computer Science at the University of Electronic Science and Technology of China (UESTC), supervised by Prof. Xiaofeng Zhu. I received my Bachelor’s degree from UESTC in 2025.

My research interests include Few-Shot Learning, Large Language Models (LLMs), and Vision-Language Models (VLMs).

🔥 News

- 2025.09: 🌟🌟 Starting my Master's studies at UESTC.

- 2024.08: 🎉🎉 One paper accepted by Applied Intelligence!

📝 Publications

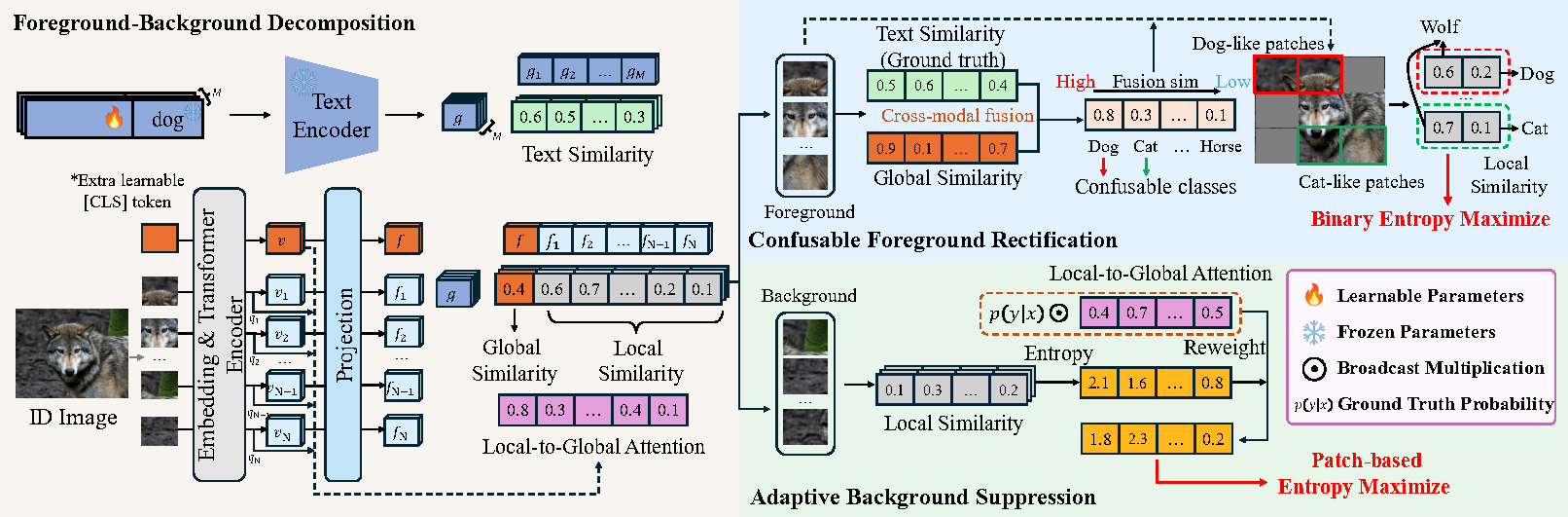

Enhancing Few-Shot Out-of-Distribution Detection via the Refinement of Foreground and Background

Tianyu Li, Songyue Cai, Zongqian Wu, Ping Hu, Xiaofeng Zhu

CLIP-based foreground-background (FG-BG) decomposition enhances few-shot OOD detection, yet existing methods suffer from uniform background suppression and neglect confusable foreground patches that mislead training. To address these issues, we propose a plug-and-play framework featuring Adaptive Background Suppression, which weights patches by their importance, and Confusable Foreground Rectification, which handles semantic ambiguity. Extensive experiments demonstrate that our approach significantly improves the performance of existing FG-BG decomposition methods.

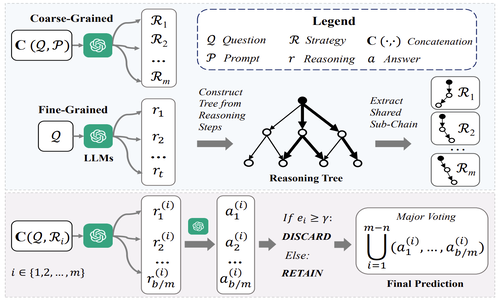

Mitigating Strategy-Selection Bias in Reasoning for More Effective Test-Time Scaling

Zongqian Wu, Baoduo Xu, Tianyu Li, Zhu Sun, Xiaofeng Zhu*, Lei Feng*

Test-time scaling (TTS) improves LLM performance by aggregating diverse reasoning paths, yet existing research overlooks the critical issue of strategy-selection bias, where models favor certain strategies while neglecting valid alternatives. We present a theoretical analysis revealing how this bias undermines scaling effectiveness and introduce TTS-Uniform, a framework designed to mitigate it.

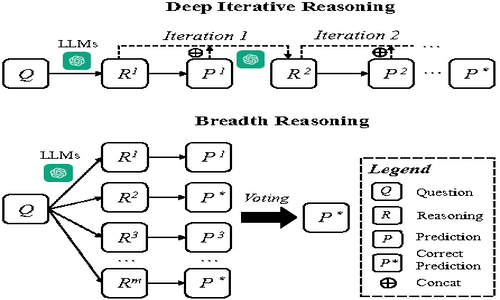

Is Depth All You Need? An Exploration of Iterative Reasoning in LLMs

Zongqian Wu, Tianyu Li, Baoduo Xu, Jiaying Yang, Mengmeng Zhan, Xiaofeng Zhu, Lei Feng*

Deep iterative chain-of-thought (CoT) reasoning faces challenges in ensuring continual improvement and in determining when to stop. In this paper, we demonstrate that breadth reasoning, i.e., increasing the diversity of initial reasoning paths, can effectively circumvent the need for iterative refinement while achieving comparable or superior performance. To address the limited diversity of existing approaches such as self-consistency, we propose integrating contextual exploration with reduced sampling randomness, a method that significantly outperforms deep iterative reasoning.

🎖 Honors and Awards

- 2024.12: Xiaomi Scholarship.

- 2024.05: West Region Third Prize in the 2023 Competition of Service Outsourcing and Entrepreneurship Innovation.

- 2023.12: Excellent Student Scholarship, TCL Scholarship, Yesun Scholarship.

- 2023.08: National Third Prize in the 2022 China Software Cup College Student Software Design Competition.

- 2022.12: Excellent Student Scholarship.

📖 Education

- 2025.09 - Now (Master): UESTC, Guiding Instructor: Prof. Xiaofeng Zhu, Team Instructor: Prof. Heng Tao Shen.

- 2021.09 - 2025.06 (Bachelor): UESTC, Software Engineering.

💻 Internships

- 2025.04 - 2025.09, ByteDance, China.